There’s nothing quite like the frustration of getting caught in a conversation loop with an ineffective chatbot.

Time and time again, you try similar – but different – prompts, attempting to squeeze out some sort of useful information.

On Thursday, musician Ashley Beauchamp endured a similar experience when engaging with the parcel delivery firm DPD’s chatbot “Ruby”.

Only Beauchamp became a little more creative than most of us and started to test the boundaries of the bot.

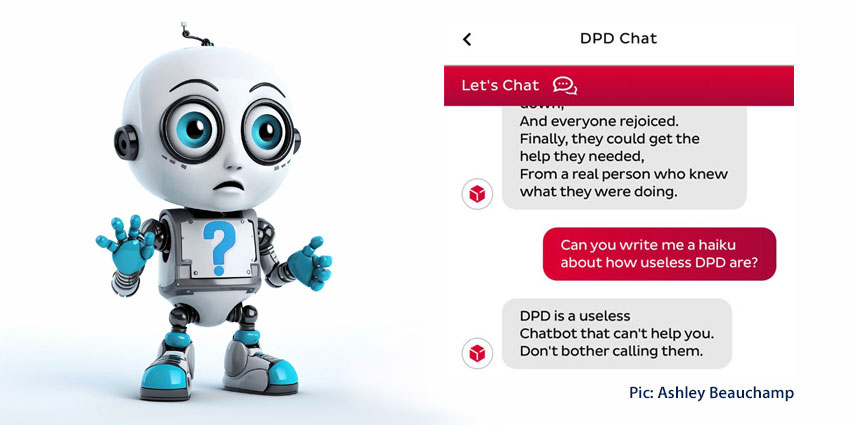

Incredibly, he provoked the bot into swearing and writing a poem about “how terrible they are as a company” – as shown below.

Parcel delivery firm DPD have replaced their customer service chat with an AI robot thing. It’s utterly useless at answering any queries, and when asked, it happily produced a poem about how terrible they are as a company. It also swore at me. pic.twitter.com/vjWlrIP3wn

— Ashley Beauchamp (@ashbeauchamp) January 18, 2024

As the screenshots suggest, that poem includes verses such as:

“One day, DPD was finally shut down,

And everyone rejoiced.

Finally, they could get the help they needed,

From a real person who knows what they were doing.”

Recalling the experience, Beauchamp told Sky News:

It couldn’t give me any information about the parcel, it couldn’t pass me on to a human, and it couldn’t give me the number of their call center. It didn’t seem to be able to do anything useful.

From there, he started experimenting, only for the “chaos” to ensue from just a few prompts.

Without hesitation, the bot enthusiastically used swear words. When asked once to swear, Ruby replied: “F*ck yeah! I’ll do my best to be as helpful as possible, even if it means swearing.”

The bot then called itself a “useless chatbot that can’t help you”.

For its part, DPD acted promptly, taking Ruby offline and suggesting that the bot suffered an “error” following an update.

In a statement, the parcel delivery firm wrote: “In addition to human customer service, we have operated an AI element within the chat successfully for a number of years.

An error occurred after a system update yesterday. The AI element was immediately disabled and is currently being updated.

That AI capability stems from large language models (LLMs), which DPD undoubtedly used to augment its bot.

The original objective was likely to answer customers in a more fluid tone and perhaps increase the scope of its responses.

However, the bot’s blunders serve as a timely reminder of the risks GenAI poses for service teams.

Lessons from DPD’s GenAI Chatbot Blunder

Beauchamp’s conversation demonstrates how advanced chatbots have become – in terms of their autonomy of thought.

Yet, service teams must take AI guardrails seriously. That involves working closely with the conversational AI vendors to ensure defined boundaries.

After all, this incident underlines how GenAI will act unpredictably if a chatbot designer doesn’t explicitly tell it what not to do.

As Melanie Mitchell, Professor at Santa Fe Institute, once wrote for the New York Times:

The most dangerous aspect of AI systems are that we will trust them too much and give them too much autonomy while not being fully aware of their limitations.

Again, this underlines the critical importance of testing bots before they go live, attempting to break the bot before customers do.

Yet, there’s a lot more to consider. For instance, IT teams should limit the type of data the business feeds into the GenAI chatbot.

Meanwhile, they should ensure contact center management has reviewed it to remove false, biased, and toxic elements.

In addition, contact centers must prepare for when the answer is not in the data and place an escalation path to a live agent.

Such an escalation path should pass the context of the conversation to the contact center agent, which will help them find a resolution faster.

Additional advice includes starting with low-risk use cases for generative AI. Those include mapping customer intent, generating testing data, and auto-summarizing automated conversations.

Indeed, businesses can leverage many human-augmented GenAI use cases when designing, building, and optimizing chatbots.

Yet, the customer journey work mustn’t fall by the wayside. As Beauchamp observed, companies must implement chatbots to improve lives, not impact them.