I’m still thinking about the CEO’s message when the next alert appears.

New system update.

Priority: High.

I open the briefing.

It’s from the AI personalisation team.

They’ve been working on something new.

Something they’re very excited about.

Emotion detection is now fully integrated into the CX platform.

Not just sentiment.

Emotion.

The system can now detect:

- Stress

- Frustration

- Anxiety

- Confusion

- Hesitation

In real time.

Across voice, chat, and video.

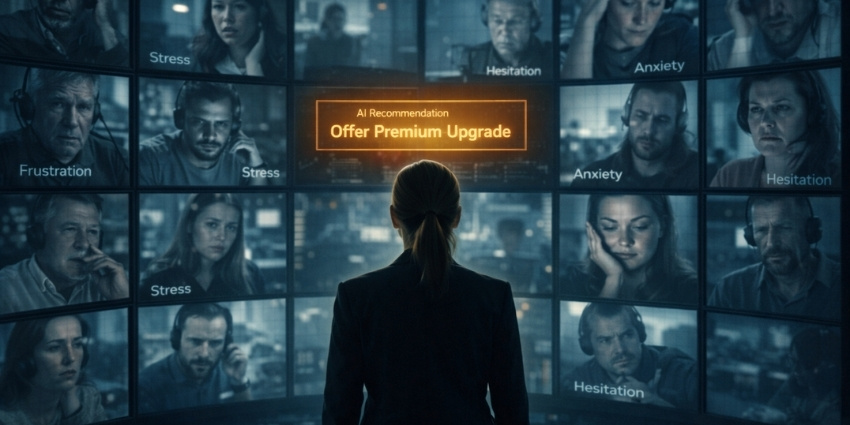

The dashboard shows a new metric: Emotional State Index.

Every customer interaction now includes a live emotional profile.

I ask the obvious question.

“What do we do with this?”

The product lead answers immediately.

“We adapt the journey in real time. If a customer sounds stressed, we simplify the process. If they sound confident, we offer upgrades. If they sound uncertain, we guide their decision.”

I nod.

That makes sense.

Then he adds:

“And if they sound vulnerable, we prioritise high-margin products. Conversion rates are much higher.”

The room goes quiet.

On the screen, a demo interaction appears.

A customer is calling about a late delivery.

The AI detects stress in their voice within four seconds.

The system changes strategy instantly:

- Voice tone softens

- Language becomes more empathetic

- Compensation is offered

- A premium subscription is suggested at a discounted rate

The customer accepts.

The dashboard shows the result:

Issue resolved.

Customer satisfaction: High.

Revenue generated: +£79.

Everyone in the room looks impressed.

“This is the future of customer experience,” someone says.

I look at the screen again.

The system worked perfectly.

The customer felt heard.

The problem was solved.

The company made more money.

Everyone wins.

At least, that’s what the dashboard says.

I ask one more question.

“What happens if the system gets too good at this?”

They don’t understand what I mean.

So I explain.

“At what point does understanding customers better… become manipulating them?”

No one answers immediately.

Eventually, someone says:

“We’re just giving customers what they need.”

I look back at the dashboard.

The Emotional State Index is updating in real time.

Green.

Amber.

Red.

Stress.

Relief.

Hesitation.

Acceptance.

Every emotion measured.

Every response optimised.

Every decision guided.

Ten years ago, we were trying to understand what customers wanted.

Now we’re predicting what they’ll do.

And quietly steering them when it matters most.

The system doesn’t just resolve issues anymore.

It influences outcomes.

I close the dashboard.

And for the second time today, I write a note to myself.

Not about automation.

Not about efficiency.

About something else.

Just because we can understand how customers feel…

Doesn’t mean we should use it against them.

Reality Check: How Close Are We?

Many of the technologies in this story already exist:

- Emotion AI and sentiment detection in contact centres

- Real-time agent assistance and dynamic scripting

- AI-driven personalisation and recommendation engines

- Customer journey orchestration based on behaviour and intent

The technology to detect emotion is already here.

The technology to influence decisions is already here.

The question is whether CX leaders choose to use it to help customers…

Or to optimise them.

CX Leader Takeaway

AI will allow companies to understand customers better than ever before.

But understanding creates responsibility.

Just because AI can detect emotion…

Doesn’t mean businesses should monetise it.

The future of CX won’t be defined by what AI can do.

It will be defined by what CX leaders choose not to do.

Previous chapter:

Future of CX: Part 1 – 9:05 AM — The CEO Wants 100% Automation

Next chapter:

Future of CX: Part 3 – 11:40 AM — The Customer Who Wanted a Human (coming soon)

New Series: Future of CX — Humanity Against the Machine

This story is part of a new CX Today series, Future of CX: Humanity Against the Machine, a first-person walk-through of what it really feels like to run customer experience when AI agents, automation, and predictive systems begin making decisions before customers even ask.

Each chapter follows a single day in the life of a CX leader balancing human empathy with relentless pressure to automate, optimise, and increase shareholder value.

New chapter every week — next up: a customer refuses to interact with AI, and the system flags them as inefficient.

For early previews and what’s coming next, follow Rob on LinkedIn.