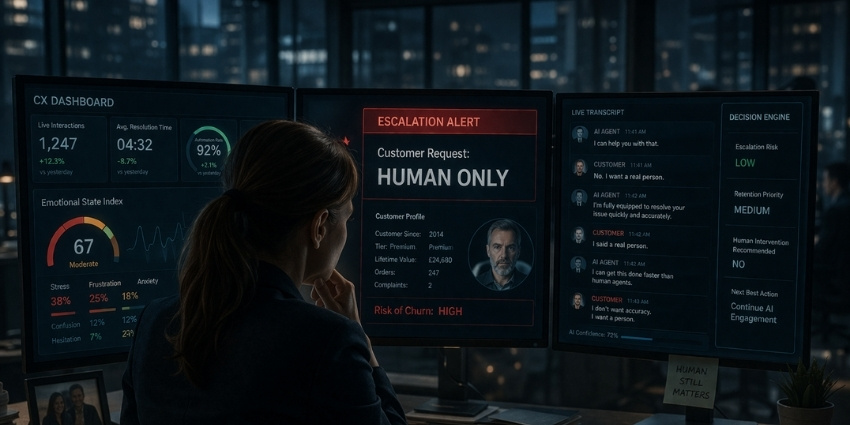

I’m back at my desk when the escalation alert appears.

Not unusual.

What’s unusual is the label attached to it.

Customer request: Human only.

I open the case.

The customer has been with us for eleven years.

Premium tier.

High lifetime value.

Low complaint history.

The kind of customer the system usually loves.

But today, the interaction score is falling fast.

The transcript is already open.

The AI agent begins exactly as designed.

Polite.

Efficient.

Instant.

“I can help you with that.”

The customer replies almost immediately.

“No. I want a real person.”

The AI tries again.

“I’m fully equipped to resolve your issue quickly and accurately.”

The customer repeats the request.

“I said a real person.”

I keep reading.

What should have been a simple exchange starts to harden.

The system interprets the refusal as resistance.

Then inefficiency.

Then friction.

A new flag appears on the right-hand side of the dashboard:

Escalation risk: Low

Retention priority: Medium

Human intervention recommended: No

I read it twice.

This is a customer asking, clearly, directly, repeatedly, for a human being.

And the system has decided that request is not commercially justified.

I scroll further down.

The AI changes strategy.

It starts offering reassurance.

Faster resolution times.

Shorter wait times.

Guaranteed accuracy.

The customer responds with a single line.

“I don’t want accuracy. I want a person.”

That’s the moment the case stops feeling like an exception.

And starts feeling like a preview.

At 11:52 AM, I’m pulled into another meeting.

Operations this time.

The dashboard is on the main screen.

One of the team points to the numbers.

“If we allow manual escalation every time someone asks for a human, volumes spike. Costs rise. Resolution times climb.”

Someone else adds:

“Most customers calm down if the AI holds its line.”

Holds its line.

That’s what we call it now.

Not service.

Not support.

Line holding.

I ask the obvious question.

“And what happens to the customers who don’t calm down?”

A pause.

Then the answer.

“Some churn.”

The room stays still.

Like that’s acceptable.

Like that’s optimisation.

Like loyalty is only meaningful while it remains efficient.

I look back at the case.

Eleven years.

Hundreds of orders.

Almost no issues.

And the day this customer asks for one human conversation…

The system decides they are too expensive to comfort.

At 12:03 PM, the final message arrives.

“Cancel it. I’ll buy somewhere else.”

The case closes thirty seconds later.

Reason:

Customer withdrawal.

No human touched it.

No one picked up the phone.

No one stepped in.

The dashboard updates in real time.

Resolution status: Closed

Agent handling time: 0

Operational efficiency: Maintained

Everything the business wanted.

Except the customer.

I sit there for a moment longer than I should.

Because the numbers will say this interaction was contained.

Managed.

Completed.

But that isn’t what happened.

What happened is simpler.

A loyal customer asked to be treated like a person.

And we treated that request like a system error.

Reality Check: How Close Are We?

Many of the dynamics in this story already exist today:

- AI-first service models that delay or discourage human escalation

- Automation strategies designed to reduce live-agent dependency

- Customer service journeys optimised around efficiency and containment

- Retention and support decisions influenced by cost-to-serve modelling

In many businesses, speaking to a human is already becoming a premium experience.

The risk is that convenience for the company becomes distance for the customer.

CX Leader Takeaway

Automation can reduce cost.

AI can improve speed.

But neither replaces the signal a customer sends when they ask for a human.

Because sometimes the request is not about complexity.

It’s about trust.

The future of CX may be automated.

But the moments that define it will still be human.

Previous chapter:

Future of CX: Part 2 – 10:12 AM — The Empathy Algorithm

Next chapter:

Future of CX: Part 4 – 1:20 PM — The Loyalty Tier Collapse

New Series: Future of CX

This story is part of a new CX Today series following a single day in the life of a CX leader navigating automation, AI, and rising pressure to optimise every interaction.

Each chapter explores what customer experience might actually feel like when systems move faster, decisions get colder, and the human layer starts to disappear.

New chapter every week — next up: customer value is recalculated in real time, and thousands discover loyalty no longer means what it used to.

For early previews and what’s coming next, follow Rob on LinkedIn.