Microsoft WorkLab’s latest research makes the market split hard to ignore. Microsoft surveyed 500 enterprise decision-makers across 13 countries and 16 industries, then grouped companies by readiness to deploy agents. ‘Achievers’ score high on both strategy and execution, while ‘Discoverers’ score low on both and tend to remain stuck in pilots longer.

Microsoft says Achievers expect to scale roughly 2.5 times faster than Discoverers, and it argues the gap is widening as agent adoption accelerates.

For CX leaders, this is not just a productivity story. It is a quality and trust story. Agents will touch customer journeys, case resolution, billing, onboarding, and knowledge. If the foundations are weak, the automation doesn’t just move faster; it can spread errors faster.

This is also why readiness is becoming an operating model issue, not a tooling debate. As enterprises buy ‘intelligence on tap,’ they need a way to govern it, allocate it, and make it accountable, just like any other critical resource.

In Microsoft’s Work Trend Index 2025, Karim R. Lakhani, Professor at Harvard University, argues that as AI democratizes expertise, enterprises will need new internal functions to manage and govern that capability:

“As AI democratizes access to expertise and intelligence, we’ll see the rise of Intelligence Resources departments, much like how HR and IT evolved into core functions… emerging as a critical source of competitive advantage in the AI-enabled enterprise.”

Lakhani’s point is strategic, but it lands in a very practical place. If ‘intelligence’ becomes a managed enterprise resource, then leaders need a repeatable way to distribute it into real workflows, and a way to make teams trust it enough to use it under pressure. That’s where AI agent readiness stops being abstract and starts showing up in how work actually gets done.

The same report highlights Supergood as an example of ‘expertise on tap.’ In that context, Mike Barrett, Chief Strategy Officer at Supergood, describes how agent-driven work changes who gets access to strategic thinking:

“We don’t need a strategist on every brief. Everyone at Supergood has access to that expertise via our platform.”

The Readiness Divide: Achievers, Discoverers, And Everyone In Between

Microsoft WorkLab frames readiness as a two-axis reality: strategy and execution. That distinction matters because enterprise AI programs often over-invest in vision and under-invest in operational readiness. Others do the reverse, rolling out tools without clarity on where agents should drive measurable outcomes.

Microsoft’s segmentation highlights four profiles: Achievers (high strategy and execution), Visionaries (high strategy, low execution), Operators (low strategy, high execution), and Discoverers (low on both). Microsoft also notes a practical speed gap. Companies with grand strategies but weak operations take at least nine months on average to deploy, while top performers report under six months.

The most useful takeaway is also the most deflating: Microsoft says what separates Achievers is not budget or technical firepower. It is preparation.

The research also points to five core capabilities shaping readiness, spanning strategy and execution. They include business and AI strategy alignment, business process mapping, technology and data foundation, organizational culture and readiness, and security and governance.

This is the part many leaders want to skip because it looks like internal housekeeping. But Microsoft’s message is clear: agents compound value only when the enterprise is structured enough to support them.

Agent deployments also fail for a reason leaders often underestimate: the organization tries to install a new way of working without doing the human work that makes it stick. AI agent readiness means redefining who owns decisions, where escalation happens, how exceptions are handled, and how teams build trust in outputs that can change minute by minute.

In Microsoft’s Work Trend Index 2025, Amy Webb, CEO at Future Today Strategy Group, ties agent adoption to organizational reality, not tools. She warns that readiness failures usually start with people, not models:

“If you have a people problem, you will have an AI problem. As multi-agent systems redefine the workplace, the challenge will be to integrate and manage them securely and effectively.”

That warning is a readiness checklist in disguise. If teams don’t have clarity, training, and safety rails before rollout, agents won’t compound value, they’ll compound confusion.

The ‘Process Debt’ Trap, And Why Pilots Get Stuck

Microsoft WorkLab provides a statistic that should make any enterprise transformation leader pause: based on its 500-respondent survey, only 22% ‘strongly agree’ their organization has documented key processes and data dependencies. That gap is a blueprint for stalled scale.

When workflows are not documented, agents operate without context. They can optimize the wrong outcomes, mishandle exceptions, or create new bottlenecks that teams can’t diagnose because the underlying process was never mapped. That is ‘process debt,’ and agents will inherit it.

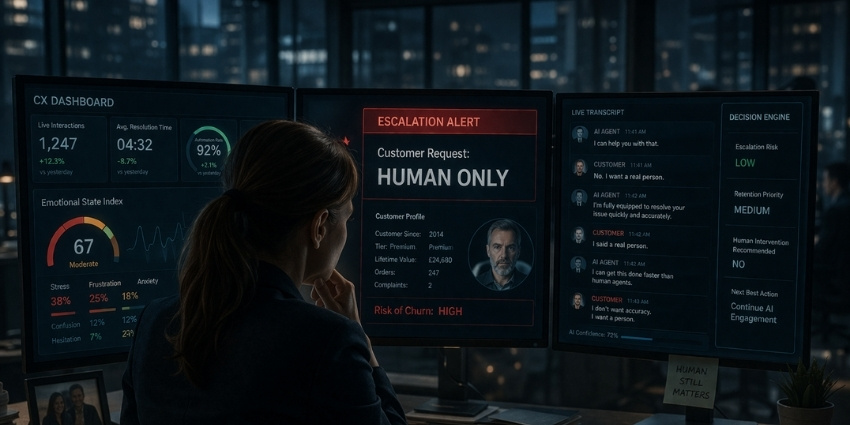

The process debt problem is larger than productivity. In CX environments, it can show up as inconsistent answers, incorrect routing, repeated requests for customer information, and escalations that rise rather than fall. If a workflow is unclear for humans, it will not become clearer just because an agent is operating inside it.

Process mapping is not enough if data is fragmented. Microsoft WorkLab reports that nearly 80% of organizations say they can’t share data across teams in ways that make agentic AI work, and it also reports that 80% of leaders say data isn’t accessible across teams. Whichever figure you take, the implication is the same: agents can’t deliver reliable outcomes when they can’t see the full state of the business.

Data readiness is also about ownership. Microsoft notes that, on average, only one in four organizations strongly agrees it has clearly defined owners responsible for keeping knowledge sources current and reliable. That is a major risk when agents are expected to make decisions across systems.

AI Agent Readiness Is Also A Change Management Test

Microsoft’s research also points to a talent gap that will slow adoption even when technology is ready. It reports that an average of 17% of companies strongly agree they have a clear talent strategy that defines future jobs, roles, and skills for an AI-driven business. Among Achievers, Microsoft says 50% are already reimagining roles and career paths for an AI-first business, while among Discoverers it is essentially zero.

It also highlights change management as a decisive differentiator. Microsoft reports that 56% of leaders in top firms strongly agree they have solid plans to help employees adapt, compared to 4% of slower adopters.

In practice, this determines whether agents become a daily operating layer or a novelty. If teams don’t trust the outputs, don’t understand escalation paths, or fear displacement, adoption becomes superficial. Workarounds become normal. Leaders then misread the situation as ‘the tech didn’t deliver,’ when the real failure was readiness.

That’s the moment when the conversation has to shift from ‘what the agent can do’ to ‘how the team works with it’. Readiness means designing collaboration patterns, escalation paths, and human accountability, so agents are treated like part of the operating rhythm.

Conor Grennan, Chief AI Architect at NYU Stern, put it simply:

“The unlock is when we realize it’s not a tool but a new kind of team member.”

Compliance And Liability: When Agents Take Action, The Risk Model Changes

The more autonomy we give agents, the more governance stops being a box-check and becomes a prerequisite for safe scale.

The Liability Gap

Clifford Chance warns that agentic AI changes the nature of technology risk because these systems don’t just generate insights, they take actions, make decisions, and can operate without human oversight. It also flags a ‘liability gap’ emerging as businesses deploy agentic capabilities under legacy contracts written for passive software.

Clifford Chance is clear about where risk can land. In many tech agreements for agentic AI, suppliers disclaim accuracy, reliability, and fitness for purpose, and warn outputs shouldn’t be relied on. With agents, that disclaimer extends to actions, so if an agent misprices a product, misroutes payments, or sends the wrong customer message, the customer may carry the liability.

The damage is also often the kind contracts cap or exclude. Clifford Chance points to common carve-outs like loss of profits, loss of data, and consequential or indirect damages, with liability frequently capped at fees paid. But agent failures can drive those exact harms at scale, from compliance fines and operational disruption to reputational churn and data loss.

They also flag a separate gap: many legacy agreements lack clear rights to explainability, logs, and oversight, even though customers still have to justify agent behavior to regulators, auditors, customers, or courts.

The Agentic AI Revolution

Squire Patton Boggs makes a related point through a legal-risk lens: as agentic AI expands in enterprise software, so does the need for controls such as human sign-off for material decisions, logging, circuit breakers, and clear internal accountability.

The report argues the risk model shifts when agents move from generating content to taking actions across systems. “Black box” decisions can be hard to trace, and that creates legal exposure, from employment discrimination claims to negligence when customers rely on wrong outputs. For AI agent readiness, safe scale means being able to explain why an agent acted, what data it used, and what controls governed it.

Its mitigation playbook aligns with readiness work teams can start now: clear internal ownership (an AI officer or equivalent), human sign-off for high-impact decisions, technical guardrails like circuit breakers or kill switches, and strong logging and monitoring to prove oversight and respond fast when agents fail.

In readiness terms, these controls shouldn’t be bolted on after rollout. They should be designed into the workflow before agents are granted authority to spend money, trigger customer communications, or change records of truth.
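For teams that want to see what ‘designed into the workflow’ could look like, here is a minimal Python sketch of the controls named above: human sign-off for high-impact actions, a circuit breaker, and an audit log. It is an illustration, not a method taken from Microsoft, Clifford Chance, or Squire Patton Boggs. The names `AgentAction` and `GuardedExecutor`, the approval threshold, and the failure limit are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class AgentAction:
    kind: str          # e.g. "refund", "send_message", "update_record"
    amount: float      # monetary impact; 0.0 for non-financial actions
    description: str


@dataclass
class GuardedExecutor:
    # Hypothetical settings: tune these to your own risk appetite.
    approval_threshold: float = 100.0  # above this, a human must sign off
    max_failures: int = 3              # failures before the breaker trips
    failures: int = 0
    tripped: bool = False
    audit_log: List[dict] = field(default_factory=list)

    def _log(self, action: AgentAction, outcome: str) -> None:
        # Record every attempt, including blocked ones, so behavior can be explained later.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "kind": action.kind,
            "amount": action.amount,
            "description": action.description,
            "outcome": outcome,
        })

    def execute(
        self,
        action: AgentAction,
        run: Callable[[AgentAction], None],
        approve: Callable[[AgentAction], bool],
    ) -> str:
        # Circuit breaker: once tripped, nothing runs until a human resets it.
        if self.tripped:
            self._log(action, "blocked_breaker_open")
            return "blocked_breaker_open"
        # Human sign-off: high-impact actions need explicit approval.
        if action.amount > self.approval_threshold and not approve(action):
            self._log(action, "rejected_by_human")
            return "rejected_by_human"
        try:
            run(action)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.tripped = True
            self._log(action, "failed")
            return "failed"
        self._log(action, "executed")
        return "executed"


executor = GuardedExecutor(approval_threshold=100.0, max_failures=2)
small = AgentAction("refund", 20.0, "goodwill refund")
large = AgentAction("refund", 500.0, "full order refund")

# The small refund runs on its own; the large one is stopped because the reviewer declines.
print(executor.execute(small, run=lambda a: None, approve=lambda a: False))
print(executor.execute(large, run=lambda a: None, approve=lambda a: False))
```

The design choice worth noting is that the log entry is written for every path, including rejections and blocked calls, because the legal guidance above is as much about proving oversight as about preventing harm.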

The Bottom Line: You Can’t Buy AI Agent Readiness

For CX leaders, this matters because the first high-profile failures of agentic systems may not come from model quality. They may come from unclear responsibility when an agent approves something it should not have approved, discloses something it should not have disclosed, or acts based on incomplete context.

AI agents can absolutely drive real value, and the trendline says they’re arriving quickly. Microsoft’s 2025 Work Trend Index reports that 81% of leaders expect agents to be moderately or extensively integrated into their company’s AI strategy in the next 12–18 months, while adoption remains uneven on the ground.

That is exactly why readiness is turning into competitive separation. The companies that map workflows, unify data, redesign roles, and lock in governance will move faster, and they will also move more safely. Everyone else will be running pilots that look impressive in isolation but collapse when they touch real enterprise complexity.

And in CX, that complexity is the work.
