Cyara has announced new agentic testing and AI governance capabilities to ensure AI agents deliver consistent and reliable interactions once deployed into customer service.

These tools are designed to validate and monitor AI behavior across voice and digital channels, both before and after customer interactions.

This move supports Cyara’s wider goal of addressing the gap between the projected benefits of agentic AI and current customer perceptions of its performance.

Sushil Kumar, CEO at Cyara, argues that enterprises can only deploy AI agents responsibly if they can verify ahead of time that those agents perform correctly, follow regulations, and avoid bias, and that Cyara’s new capabilities provide that assurance.

“Every enterprise wants to deploy AI agents in their contact center. The ones who actually will are the ones who can prove those agents work, before customers find out they don’t,” he explained.

“We built these capabilities because the level of assurance has to match the level of autonomy.

“If you’re putting an AI agent on a live customer call, you need to know it will handle the conversation correctly, comply with regulations, and not introduce bias. That’s what Cyara now delivers.”

The Trust Gap Facing AI in Customer Service

Gartner predicts that agentic AI will autonomously resolve 80% of common customer service issues by 2029, with enterprises expected to see lower costs and customers expected to get lower-effort experiences.

However, research suggests that many AI-driven customer service interactions are falling short of these projected benefits.

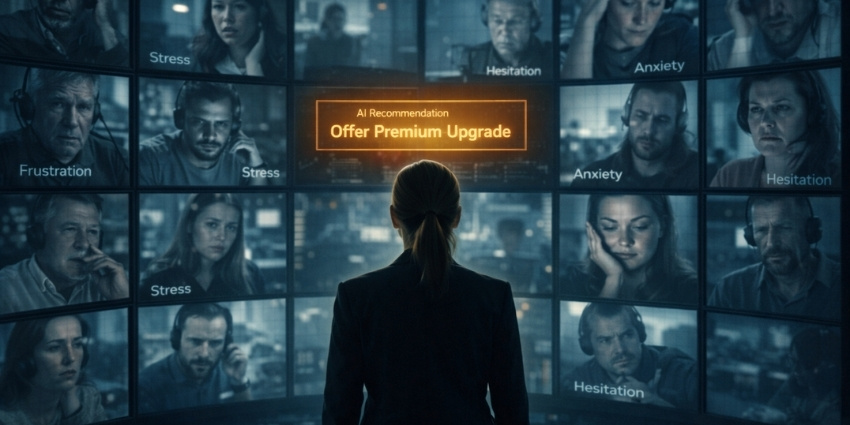

Customers continue to report negative interactions with AI and automation, as AI agents often lack empathy and context during conversations, which leads to frustration and weakens customer loyalty.

Many organizations still see inaccurate answers, inconsistent guidance, or hallucinated information because they lack strong governance over the AI’s behavior.

According to the PEX Report 2025/26, 96% of CX leaders believe that AI is essential for workflows, but only 43% have a governance policy in place.

As customer expectations shift toward security, trust, and reliability, enterprises that operate without proper governance risk making customers doubt the safety of their data.

Ungoverned AI can also violate disclosure rules, privacy requirements, or financial regulations, and the risk grows when autonomous or agentic behavior has not been checked before deployment.

Customers may also experience inconsistent interactions, where an AI unintentionally treats different customer groups in discriminatory or unequal ways.

Without proper governance, bias goes undetected and becomes a systemic risk.

Yet CX leaders still believe their AI agents are handling customer service tasks reliably, which creates a mismatch between AI’s anticipated capabilities and the current reality of customer interactions.

Cyara Targets Customer Trust in Agentic AI Deployments

In response, Cyara has introduced three interconnected capabilities within its AI Trust suite, designed to support reliable and governed deployments of agentic AI across three CX environments.

Agentic AI Testing for Voice and IVR

This system evaluates AI agents by using AI-driven test agents to simulate customer interactions across multiple scenarios.

By analyzing how the voice AI responds, handles branching logic, and behaves under changing inputs, the platform can compare expected outcomes with actual responses and flag any incorrect handling.

This process reveals failures that scripted test cases often miss, because agentic systems typically adapt and vary in behavior.

Cyara’s testing approach identifies reliability gaps in AI voice systems before customers experience them, allowing faster resolution and reducing the risk of faulty dialogues, inconsistent responses, or failures in adaptive logic.
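To make the idea of comparing expected outcomes with actual responses concrete, here is a minimal Python sketch. It is not Cyara’s implementation or API: the `Scenario` type, the `run_scenarios` helper, and the keyword-based `toy_agent` are all hypothetical names invented for illustration. The toy agent stands in for a real voice AI, and the scenarios stand in for simulated customer conversations.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Scenario:
    """One simulated customer conversation (hypothetical structure)."""
    name: str
    customer_turns: List[str]
    expected_outcome: str


def run_scenarios(
    agent: Callable[[List[str]], str],
    scenarios: List[Scenario],
) -> List[Tuple[str, str, str]]:
    """Run every scenario and return (name, expected, actual) for each mismatch."""
    failures = []
    for scenario in scenarios:
        actual = agent(scenario.customer_turns)
        if actual != scenario.expected_outcome:
            failures.append((scenario.name, scenario.expected_outcome, actual))
    return failures


def toy_agent(turns: List[str]) -> str:
    """Illustrative stand-in for an AI agent: naive keyword matching only."""
    text = " ".join(turns).lower()
    if "refund" in text:
        return "refund_issued"
    if "human" in text:
        return "escalated"
    return "unresolved"


scenarios = [
    Scenario("refund_simple", ["I want a refund for my order"], "refund_issued"),
    # Rephrased request: the customer never says the word "human".
    Scenario("escalation_rephrased", ["Can I speak to a person?"], "escalated"),
    # Changing input: the customer withdraws the request mid-conversation.
    Scenario(
        "changed_mind",
        ["I want a refund", "Actually, never mind, keep the order"],
        "no_action",
    ),
]

failures = run_scenarios(toy_agent, scenarios)
for name, expected, actual in failures:
    print(f"{name}: expected {expected}, got {actual}")
```

In this sketch the toy agent passes the simple refund case but is flagged on the rephrased and changed-mind scenarios, which are the kinds of variation a narrow scripted path would not exercise.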

Compliance and Bias Modules

These two modules review transcripts and real-time interactions from AI agents to identify risks. They examine what the AI said, what it recommended, and how it interpreted user inputs, and flag issues that might affect fairness, safety, or legal adherence.

The Compliance module compares AI-generated outputs against internal rules and policies, whilst the Bias module measures variation in outcomes across different customer types or scenarios, identifying patterns that may indicate unfair or skewed outcomes.

Because compliance failures and bias can quickly undermine customer trust, these modules identify risks early, helping organizations prevent inconsistent or harmful outcomes before they reach customers.
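One simple way to picture "variation in outcomes across different customer types" is to compare the rate of favorable outcomes per group and flag large gaps. The Python sketch below assumes exactly that. It does not describe how Cyara’s Bias module works: the function names, the group labels, the synthetic records, and the 0.2 threshold are all illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def outcome_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the share of favorable outcomes for each customer group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, was_favorable in records:
        totals[group] += 1
        favorable[group] += int(was_favorable)
    return {group: favorable[group] / totals[group] for group in totals}


def rate_gap(rates: Dict[str, float]) -> float:
    """Difference between the best-served and worst-served group."""
    return max(rates.values()) - min(rates.values())


# Synthetic, made-up records: (customer group, did the AI grant the request?)
records = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)

GAP_THRESHOLD = 0.2  # assumed tolerance, chosen only for this example

rates = outcome_rates(records)
gap = rate_gap(rates)
flagged = gap > GAP_THRESHOLD

print(rates)
print(f"gap={gap:.2f}, flagged={flagged}")
```

A gap like this is only a signal for review, not proof of bias. A real assessment would also need to account for sample sizes and for legitimate differences between the requests each group makes.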

Recommendation Engine for Prompt Design and Test Development

The engine analyzes the AI agent’s purpose, the target journey, and the testing goals. It then generates prompt structures that encourage specific behaviors and proposes ways to combine fixed scripts with adaptive agent testing.

This helps teams create test cases that reflect real customer behavior, rather than narrow scripted paths, and can produce better evaluations of how an agent will perform in production.

Enterprises often struggle to test adaptive AI systems because traditional test design depends on predictable outputs. The recommendation engine lowers this barrier, speeding up test creation and improving coverage to reveal weak points in agentic behavior before customers encounter them.

Creating More Predictable AI-Powered CX

Together, these capabilities give enterprises a more reliable route to introducing autonomous AI into customer interactions.

Whilst agentic systems that adapt, make decisions, and generate responses in real time create new opportunities for efficiency, they also increase the risk of unpredictable outcomes.

Cyara’s solution provides continuous validation and governance across these adaptive behaviors, creating visibility into how AI systems operate, respond under varied conditions, and comply with policy and fairness requirements.

It also supports a controlled transition from human-led or scripted workflows to autonomous ones. This helps protect customer trust while organizations scale AI.

By ensuring higher predictability, consistent experiences, and accurate information, this solution supports safer and more trustworthy interactions.